Your developers are producing more code than ever. PR volumes are up. Commit graphs look impressive. And yet the roadmap is still slipping.

That is the defining leadership challenge of 2026: the gap between what looks productive and what actually is.

Research across more than 10,000 developers and 1,255 teams points to the same pattern. Developers on high-AI-adoption teams complete more tasks and merge more pull requests, but PR review time rises sharply and the system bottleneck moves downstream to human approval. Individual output goes up. System-level delivery often does not.

This is not just a tooling problem. It is a measurement problem.

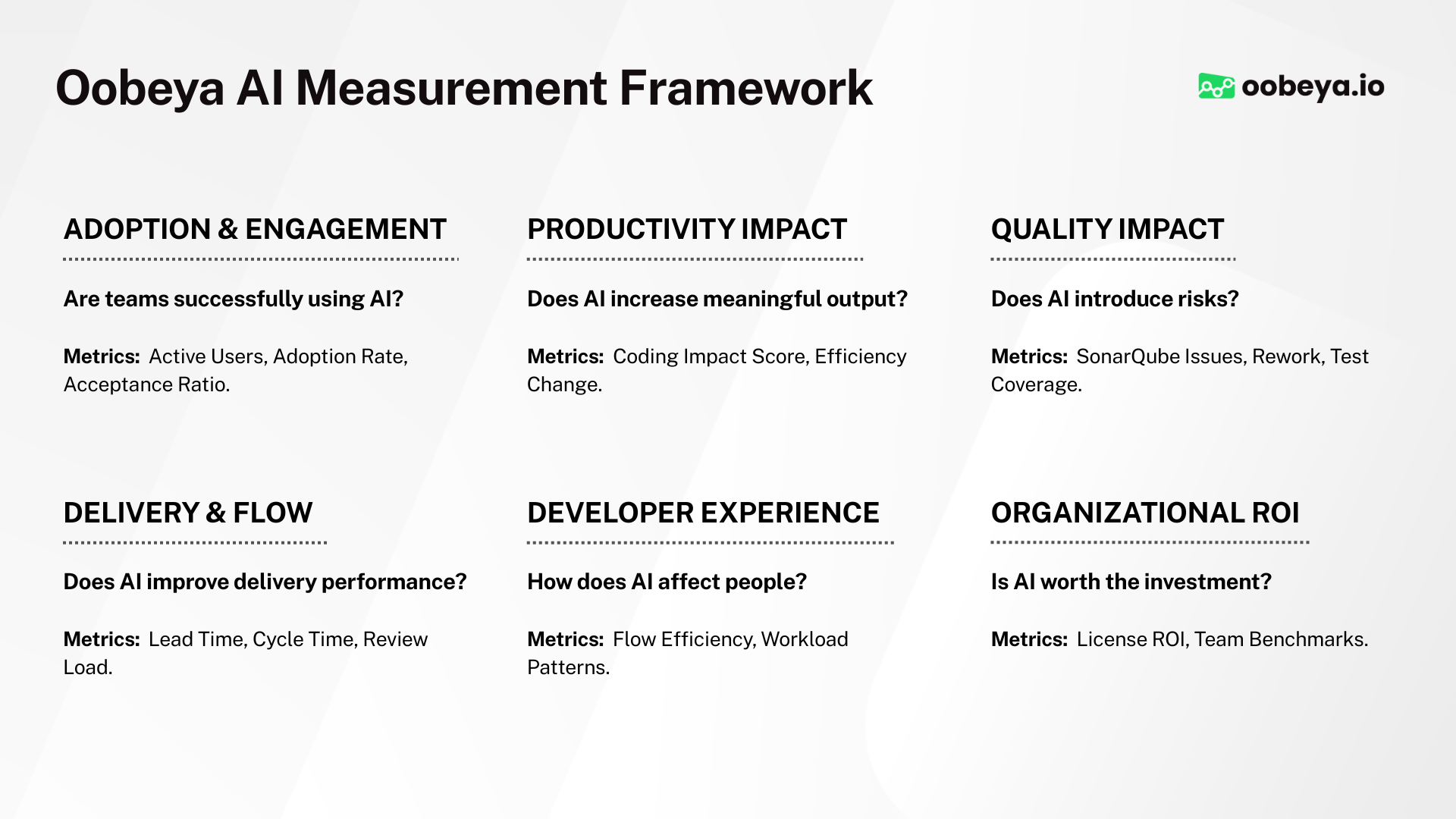

If your organization wants a practical model for measuring AI-assisted development directly, start with AI Impact and our guide to How to Measure AI-Assisted Software Development.

Table of Contents

- Why AI Breaks Traditional Metrics

- The Four-Stage AI Adoption Timeline

- DORA Metrics: Still Essential, But Not Enough

- SPACE Framework: The Human Layer

- DevEx and DX Core 4

- The Five AI-Specific Metrics Every Team Needs

- Building Your AI-Era Dashboard

- The 40-20-40 Rule

- Connecting Metrics to Business Outcomes

- Common Measurement Mistakes

- Frequently Asked Questions

Why AI Breaks Traditional Metrics

Think of software delivery as a factory. The total output of the factory is not determined by the fastest station, but by the slowest one.

For years, coding was the main constraint. AI changed that. Code generation accelerated, and the constraint shifted to code review, integration, testing, security scanning, and deployment approval.

If you speed up one machine on an assembly line without fixing the others, you do not get a faster factory. You get a pile-up.
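The pile-up can be illustrated with a tiny model. This is a minimal sketch: the stage names and weekly capacities are hypothetical, and the only rule it encodes is the one above, that the slowest stage caps system throughput.

```python
def find_bottleneck(stage_capacity: dict[str, float]) -> tuple[str, float]:
    """Return (stage, capacity) for the slowest stage, which caps system throughput."""
    stage = min(stage_capacity, key=stage_capacity.get)
    return stage, stage_capacity[stage]


# Hypothetical weekly capacities (changes per week), for illustration only.
before_ai = {"coding": 20, "review": 30, "testing": 35, "deploy": 40}
after_ai = {**before_ai, "coding": 60}  # AI speeds up coding and nothing else

print(find_bottleneck(before_ai))  # ('coding', 20)
print(find_bottleneck(after_ai))   # ('review', 30)
```

In this example coding capacity triples, but delivered throughput only rises from 20 to 30 changes per week. The rest of the new output queues in front of review.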

That is why traditional output metrics are now risky:

- Lines of code: rewards verbosity instead of clarity

- Commit count: encourages activity instead of meaningful change

- PR volume: can rise even while delivery slows down

- Story points velocity: easy to game and hard to compare

In the AI era, these metrics are easier than ever to inflate and harder than ever to trust.

The old job was writing, reviewing, and deploying code. The new job increasingly includes orchestrating AI tools, steering multiple parallel workstreams, reviewing generated output, and making the judgment calls AI still cannot make. Your metrics need to reflect that shift.

The Four-Stage AI Adoption Timeline

One of the most common patterns in AI-augmented engineering teams is a four-stage adoption curve.

1. The Enthusiasm Phase

In the first few weeks, developer sentiment is high. PR counts rise. Individuals report feeling faster. This is the phase where many leaders conclude AI is already delivering ROI.

2. The Review Queue Crisis

By month two, senior engineers are spending far more time reviewing AI-generated pull requests. Review queues back up. Merge times slow. What looked like a productivity win starts to create downstream pressure.

3. The Quality Debt Phase

By month three, quality issues begin to emerge. Code may pass CI, yet fail in edge cases, introduce churn, or increase rework. Delivery looks fast on the surface but becomes expensive in maintenance and review load.

4. The Delivery Paradox

By month four, teams often discover that lead time is not improving the way they expected. Work is stuck in review, rework, and incident response. This is where disciplined measurement becomes essential.

The most common leadership mistake is judging AI in phase one using only phase-one signals.

DORA Metrics: Still Essential, But Not Enough

DORA metrics remain one of the strongest baselines for engineering performance.

They still matter:

- Deployment Frequency

- Lead Time for Changes

- Change Failure Rate

- Mean Time to Restore

But in the AI era, DORA alone is not enough.

AI can increase code output without improving delivery flow. That means leaders need to read DORA with more context:

- review pressure

- AI-assisted PR volume

- code quality and rework

- incident and failure patterns

- team-level ownership and workload

If PR volume is up but lead time is flat, the bottleneck has moved. If deployment frequency rises while change failure rate also rises, quality gates are not keeping up. DORA still tells the truth, but only when paired with the right surrounding signals.
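These two reading rules can be written down as a simple check. The sketch below is one possible encoding, not a standard DORA calculation: the `PeriodSnapshot` fields, the 5% tolerance that separates "up" from "flat", and the sample figures are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class PeriodSnapshot:
    pr_volume: int              # pull requests merged in the period
    lead_time_days: float       # median lead time for changes
    deployments: int            # production deployments in the period
    change_failure_rate: float  # fraction of deployments that failed, 0.0 to 1.0


def read_dora_with_context(prev: PeriodSnapshot, curr: PeriodSnapshot,
                           tolerance: float = 0.05) -> list[str]:
    """Apply the two reading rules; `tolerance` (assumed 5%) separates 'up' from 'flat'."""

    def rose(old: float, new: float) -> bool:
        return new > old * (1 + tolerance)

    def flat(old: float, new: float) -> bool:
        return abs(new - old) <= old * tolerance

    findings = []
    if rose(prev.pr_volume, curr.pr_volume) and flat(prev.lead_time_days, curr.lead_time_days):
        findings.append("PR volume up, lead time flat: the bottleneck has moved downstream")
    if (rose(prev.deployments, curr.deployments)
            and rose(prev.change_failure_rate, curr.change_failure_rate)):
        findings.append("Deployments and failure rate both up: quality gates are not keeping up")
    return findings


# Hypothetical month-over-month figures.
last_month = PeriodSnapshot(pr_volume=100, lead_time_days=5.0,
                            deployments=20, change_failure_rate=0.10)
this_month = PeriodSnapshot(pr_volume=150, lead_time_days=5.1,
                            deployments=30, change_failure_rate=0.15)

for finding in read_dora_with_context(last_month, this_month):
    print(finding)
```

With these sample numbers both rules fire. In practice the tolerance would need tuning to the normal month-to-month noise of each team.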

If you want a more comparative lens, see Engineering Benchmarks and Symptoms as complementary ways to diagnose what DORA is not telling you directly.

SPACE Framework: The Human Layer

DORA tells you how the delivery system performs. SPACE helps explain what the team is experiencing while that system runs.

SPACE includes:

- Satisfaction and well-being

- Performance

- Activity

- Communication and collaboration

- Efficiency and flow

This matters because AI can increase visible activity while damaging deeper team health. A team may merge more PRs yet feel less confident, less focused, and more overloaded.

That is why AI-era measurement needs both:

- delivery metrics for the system

- experience metrics for the people inside the system

DevEx and DX Core 4

Developer Experience and DX Core 4 extend the same idea further.

They help teams measure:

- cognitive load

- feedback loop quality

- flow state disruption

- friction across the developer journey

This is especially important in AI-assisted environments. AI can save time at the moment of generation while increasing cognitive overhead later through larger reviews, unfamiliar patterns, or harder-to-maintain code.

The practical lesson is simple: if AI makes output easier but ownership, review quality, and confidence worse, the organization is not actually improving.

The Five AI-Specific Metrics Every Team Needs

Standard frameworks were not built for a world where AI writes a large share of code. Teams now need an explicit AI measurement layer.

1. AI Code Share

How much merged code was AI-assisted or AI-generated?

This becomes the base layer for segmenting every other metric.
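One simple way to compute it, assuming each merged pull request carries a line count and an AI flag, is sketched below. The record fields `lines_added` and `ai_assisted` are hypothetical; how a PR gets labelled as AI-assisted depends on your tooling and is outside this sketch.

```python
def ai_code_share(merged_prs: list[dict]) -> float:
    """Fraction of merged added lines that came from AI-assisted pull requests."""
    total_lines = sum(pr["lines_added"] for pr in merged_prs)
    if total_lines == 0:
        return 0.0
    ai_lines = sum(pr["lines_added"] for pr in merged_prs if pr["ai_assisted"])
    return ai_lines / total_lines


# Hypothetical merged PRs for one reporting period.
merged_prs = [
    {"id": 1, "lines_added": 300, "ai_assisted": True},
    {"id": 2, "lines_added": 100, "ai_assisted": False},
    {"id": 3, "lines_added": 100, "ai_assisted": True},
]

print(f"AI code share: {ai_code_share(merged_prs):.0%}")  # AI code share: 80%
```

Weighting by lines is only one choice. Counting PRs instead of lines would give a different share from the same data.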

2. AI vs. Human PR Cycle Time

Compare cycle time between AI-assisted and human-authored pull requests.

If AI-generated PRs are slower to review, you have found a real bottleneck.
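A minimal sketch of that comparison follows. It assumes each PR record has `opened_at`, `merged_at`, and an `ai_assisted` flag (hypothetical field names), and it uses the median so a few very slow PRs do not dominate the result.

```python
from datetime import datetime
from statistics import median


def median_cycle_hours(prs: list[dict]) -> dict[str, float]:
    """Median open-to-merge time in hours, split by AI involvement."""
    buckets: dict[str, list[float]] = {"ai": [], "human": []}
    for pr in prs:
        hours = (pr["merged_at"] - pr["opened_at"]).total_seconds() / 3600
        buckets["ai" if pr["ai_assisted"] else "human"].append(hours)
    # Skip empty segments so median() never sees an empty list.
    return {segment: median(values) for segment, values in buckets.items() if values}


# Hypothetical PR records.
prs = [
    {"opened_at": datetime(2026, 1, 5, 9), "merged_at": datetime(2026, 1, 7, 9), "ai_assisted": True},
    {"opened_at": datetime(2026, 1, 6, 9), "merged_at": datetime(2026, 1, 9, 9), "ai_assisted": True},
    {"opened_at": datetime(2026, 1, 5, 9), "merged_at": datetime(2026, 1, 6, 9), "ai_assisted": False},
    {"opened_at": datetime(2026, 1, 6, 10), "merged_at": datetime(2026, 1, 7, 22), "ai_assisted": False},
]

print(median_cycle_hours(prs))  # {'ai': 60.0, 'human': 30.0}
```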

3. AI Code Churn Rate

How much AI-generated code is rewritten or deleted within 30 days of being merged?

High churn is often a leading indicator of quality debt.
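As a rough sketch, churn can be computed from records of AI-generated code chunks. The record shape here (`lines`, `merged_on`, `rewritten_on`) is an assumption; real tooling would derive it from version-control history, and the window is measured from the merge date.

```python
from datetime import date, timedelta


def ai_churn_rate(chunks: list[dict], window_days: int = 30) -> float:
    """Share of AI-generated lines rewritten or deleted within `window_days` of merge."""
    total = sum(chunk["lines"] for chunk in chunks)
    if total == 0:
        return 0.0
    window = timedelta(days=window_days)
    churned = sum(
        chunk["lines"]
        for chunk in chunks
        if chunk["rewritten_on"] is not None
        and chunk["rewritten_on"] - chunk["merged_on"] <= window
    )
    return churned / total


# Hypothetical AI-generated chunks; `rewritten_on` is None if the code is untouched.
chunks = [
    {"lines": 200, "merged_on": date(2026, 1, 5), "rewritten_on": date(2026, 1, 20)},  # 15 days
    {"lines": 300, "merged_on": date(2026, 1, 5), "rewritten_on": None},
    {"lines": 500, "merged_on": date(2026, 1, 10), "rewritten_on": date(2026, 3, 1)},  # 50 days
]

print(f"30-day AI churn: {ai_churn_rate(chunks):.0%}")  # 30-day AI churn: 20%
```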

4. AI Suggestion Acceptance Rate

This is the share of AI suggestions that developers accept. It shows whether developers still trust the tool and whether the suggestions remain relevant over time.

The trend matters more than the raw number.
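One lightweight way to look at the trend is to compare the average rate in the earlier weeks with the average in the later weeks. The sketch below assumes weekly `(suggestions shown, suggestions accepted)` counts exported from the AI tool; the numbers are hypothetical.

```python
def acceptance_trend(weekly_counts: list[tuple[int, int]]) -> tuple[list[float], float]:
    """Return weekly acceptance rates and the late-minus-early change in average rate."""
    rates = [accepted / shown for shown, accepted in weekly_counts if shown > 0]
    if len(rates) < 2:
        return rates, 0.0
    half = len(rates) // 2
    early = sum(rates[:half]) / half
    late = sum(rates[half:]) / (len(rates) - half)
    return rates, late - early


# Hypothetical weekly (shown, accepted) counts.
weekly_counts = [(1000, 350), (1000, 330), (1000, 300), (1000, 260)]

rates, change = acceptance_trend(weekly_counts)
print(f"change in acceptance rate: {change:+.2f}")  # change in acceptance rate: -0.06
```

A raw rate of 26% says little on its own. A steady drop from 35% to 26% suggests trust or relevance is eroding.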

5. PR Review Load per Senior Engineer

This is one of the most important AI-era metrics.

If output rises but review burden concentrates on a few senior engineers, the organization may be moving toward burnout, slower approvals, and lower review quality.
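A sketch of how concentration might be surfaced: count completed reviews per senior engineer and report the share handled by the busiest one. The reviewer names, the `seniors` set, and the record shape are hypothetical.

```python
from collections import Counter


def senior_review_load(reviews: list[dict], seniors: set[str]) -> tuple[dict[str, int], float]:
    """Reviews per senior engineer, plus the share handled by the busiest senior."""
    counts = Counter(r["reviewer"] for r in reviews if r["reviewer"] in seniors)
    load = {name: counts[name] for name in sorted(seniors)}  # include seniors with zero reviews
    total = sum(load.values())
    top_share = max(load.values()) / total if total else 0.0
    return load, top_share


# Hypothetical review records for one week; "carol" is not a senior engineer.
reviews = (
    [{"reviewer": "alice"}] * 6
    + [{"reviewer": "bob"}] * 2
    + [{"reviewer": "carol"}] * 2
)
seniors = {"alice", "bob", "dana"}

load, top_share = senior_review_load(reviews, seniors)
print(load)                # {'alice': 6, 'bob': 2, 'dana': 0}
print(f"{top_share:.0%}")  # 75%
```

Here one engineer carries three quarters of the senior review load, which is the concentration pattern described above.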

If your team wants a more productized way to track these signals, explore AI Impact and the dedicated pages for GitHub Copilot, Cursor, and Claude.

Building Your AI-Era Dashboard

The most useful engineering dashboard in 2026 is layered.

Tier 1: Weekly System Health

Use leading indicators to catch problems early:

- PR cycle time segmented by AI-assisted vs. human-authored work

- review load per senior engineer

- AI code share

- main branch build success rate

- work in progress

Tier 2: Monthly Delivery Performance

Use DORA outcomes to evaluate delivery over complete cycles:

- deployment frequency

- lead time for changes

- change failure rate

- mean time to restore

- post-release defect rate segmented by AI involvement
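The four DORA outcomes in this tier can be derived from deployment records. The following is a simplified sketch under stated assumptions: the record fields are hypothetical, lead time is measured from commit to deployment, and restore time is measured from the failed deployment rather than from incident detection.

```python
from datetime import datetime
from statistics import median


def dora_summary(deployments: list[dict], period_days: int) -> dict:
    """Compute the four DORA metrics from deployment records for one period."""
    n = len(deployments)
    failures = [d for d in deployments if d["failed"]]
    lead_hours = [
        (d["deployed_at"] - d["committed_at"]).total_seconds() / 3600 for d in deployments
    ]
    restore_hours = [
        (d["restored_at"] - d["deployed_at"]).total_seconds() / 3600 for d in failures
    ]
    return {
        "deploys_per_week": n / (period_days / 7),
        "median_lead_time_hours": median(lead_hours) if lead_hours else None,
        "change_failure_rate": len(failures) / n if n else None,
        "mean_time_to_restore_hours": (
            sum(restore_hours) / len(restore_hours) if restore_hours else None
        ),
    }


# Hypothetical deployments over a 28-day period.
deployments = [
    {"committed_at": datetime(2026, 1, 5, 9), "deployed_at": datetime(2026, 1, 6, 9),
     "failed": False, "restored_at": None},
    {"committed_at": datetime(2026, 1, 10, 9), "deployed_at": datetime(2026, 1, 12, 9),
     "failed": True, "restored_at": datetime(2026, 1, 12, 11)},
    {"committed_at": datetime(2026, 1, 15, 9), "deployed_at": datetime(2026, 1, 18, 9),
     "failed": False, "restored_at": None},
    {"committed_at": datetime(2026, 1, 20, 9), "deployed_at": datetime(2026, 1, 24, 9),
     "failed": False, "restored_at": None},
]

print(dora_summary(deployments, period_days=28))
```

Adding an AI-involvement flag to each record would allow the same function to be run per segment.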

Tier 3: Quarterly Team and Business Health

Translate engineering performance into leadership language:

- developer satisfaction

- engineering eNPS

- roadmap delivery ratio

- revenue or value impact per engineer

- AI ROI against measurable delivery outcomes

In practice, the strongest dashboards combine DORA, AI Impact, AI Insights, and benchmarking in one shared operating model.

The 40-20-40 Rule

One of the most useful diagnostic ratios for sustainable engineering is the 40-20-40 rule:

- 40% new feature work

- 20% rework

- 40% maintenance and operational work

This matters more in the AI era because AI can inflate all three buckets at once.

It can increase feature output, but it can also increase rework and maintenance if quality controls are weak. If new feature work rises while change failure rate also rises, the team may be generating debt faster than the system can absorb it.

Track this ratio quarterly. It can reveal unhealthy delivery patterns long before they become obvious in top-line output charts.
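A quarterly check might look like the sketch below. It assumes engineering hours (or tickets) are already tagged with one of three categories; the category names and the sample hours are hypothetical.

```python
TARGET_MIX = {"feature": 0.40, "rework": 0.20, "maintenance": 0.40}


def work_mix(hours_by_category: dict[str, float]) -> dict[str, float]:
    """Convert hours per category into fractions of total effort."""
    total = sum(hours_by_category.values())
    if total <= 0:
        raise ValueError("no recorded effort for this period")
    return {category: hours / total for category, hours in hours_by_category.items()}


def drift_from_target(mix: dict[str, float]) -> dict[str, float]:
    """Difference between the actual mix and the 40-20-40 target, per category."""
    return {category: round(mix.get(category, 0.0) - target, 2)
            for category, target in TARGET_MIX.items()}


# Hypothetical hours for one quarter.
quarter_hours = {"feature": 550, "rework": 300, "maintenance": 150}

print(drift_from_target(work_mix(quarter_hours)))
# {'feature': 0.15, 'rework': 0.1, 'maintenance': -0.25}
```

In this example feature work and rework are both above target while maintenance is starved, the kind of imbalance the ratio is meant to expose.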

Connecting Metrics to Business Outcomes

Engineering metrics matter most when they can be translated into business language.

Two examples:

Lead Time for Changes

If lead time drops from three weeks to one week, features reach users faster. That means earlier feedback, earlier revenue realization, and faster competitive response.

Change Failure Rate

If your change failure rate drops from 10% to 5%, half as many deployments cause incidents. Across a large deployment volume, the savings in incident cost can be substantial. This creates a direct line from engineering quality to business impact.
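To make the arithmetic concrete, here is a worked example. The deployment volume and the cost per incident are hypothetical placeholders; substitute your own figures.

```python
def annual_failure_cost(deploys_per_year: int, change_failure_rate: float,
                        cost_per_incident: float) -> float:
    """Expected yearly cost of failed changes."""
    return deploys_per_year * change_failure_rate * cost_per_incident


# Hypothetical inputs: 1,000 deployments per year, 5,000 per incident (any currency).
cost_before = annual_failure_cost(1000, 0.10, 5000)  # about 100 failed changes
cost_after = annual_failure_cost(1000, 0.05, 5000)   # about 50 failed changes

print(f"before: {cost_before:,.0f}")                # before: 500,000
print(f"after:  {cost_after:,.0f}")                 # after:  250,000
print(f"saved:  {cost_before - cost_after:,.0f}")   # saved:  250,000
```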

In the AI era, you also need an AI line item:

- cost of AI tools

- measurable effect on lead time

- measurable effect on quality and failure rate

- measurable effect on team sustainability

That is the basis of real AI ROI.

Common Measurement Mistakes

1. Measuring AI at the Tool Level Only

Tool dashboards show usage. They do not show whether delivery actually improved.

2. Drawing Conclusions Too Early

The first 4-8 weeks of adoption are noisy. Short-term enthusiasm is not the same as system-level improvement.

3. Overweighting Individual Metrics

The shift is from individual productivity to system efficiency. Outcome metrics matter more than activity metrics.

4. Skipping the Pre-AI Baseline

Without baseline measurements, attribution becomes guesswork.

5. Treating Adoption as Success

High adoption without better delivery, quality, or developer experience is not success.

Frequently Asked Questions

What are the most important engineering metrics in the AI era?

The most important set combines delivery flow metrics, AI-specific signals, and developer experience metrics. That typically means DORA, AI code share, PR cycle time segmented by AI involvement, code churn, review load, and team experience signals such as satisfaction or cognitive load.

Why do traditional engineering KPIs fail when teams adopt AI?

Because they were designed to approximate human effort. AI breaks that assumption by inflating output metrics like code volume and PR count without guaranteeing faster or better delivery.

What is the AI productivity paradox?

It is the gap between individual output gains and organizational delivery outcomes. AI can make individuals faster while making the system slower if bottlenecks in review, testing, or integration are left untouched.

How should DORA, SPACE, and DevEx work together?

Use DORA for system delivery performance, SPACE for team health, and DevEx for friction diagnosis. Together they help teams avoid optimizing one dimension at the expense of another.

Why does the 40-20-40 rule matter in the AI era?

Because AI can increase feature velocity and rework at the same time. Tracking the balance between new work, rework, and maintenance helps leaders spot unsustainable acceleration early.

Conclusion

Engineering metrics in the AI era require a shift in philosophy: from counting what developers produce to measuring how value flows through the full delivery system.

DORA gives you the delivery baseline. SPACE gives you the human signal. DevEx gives you friction diagnostics. AI-specific metrics give you attribution.

Together, these layers create a more honest measurement model for 2026.

The strongest teams will not be the ones with the highest PR volume or the most exciting AI adoption dashboards. They will be the ones that establish baselines, pair speed with quality guardrails, monitor review bottlenecks, and translate engineering performance into decisions leadership can trust.

To put this into practice, start with:

DORA and Flow Metrics Field Guide

Connect DORA with flow, quality, and planning signals to turn metric trends into practical improvement actions.

Written by Emre Dundar

Emre Dundar is the Co-Founder & Chief Product Officer of Oobeya. Before starting Oobeya, he worked as a DevOps and Release Manager at Isbank and Ericsson. He later transitioned to consulting, focusing on SDLC, DevOps, and code quality. Since 2018, he has been dedicated to building Oobeya, helping engineering leaders improve productivity and quality.