Your DORA metrics look fine. Deployment frequency is up. Lead time is down. And yet something still feels off. Your roadmap is slipping, senior engineers are drowning in review work, and it is still unclear whether your AI investment is truly improving delivery.

You are not imagining it. DORA metrics were never designed to answer those questions by themselves.

The 2025 DORA State of AI-assisted Software Development report, based on a survey of nearly 5,000 technology professionals, reinforced what many engineering leaders already feel in practice: software delivery metrics alone are no longer sufficient. They tell you what is happening, but not why it is happening.

That gap is what this article is about. We will walk through what DORA can and cannot tell you in 2026, what elite teams track beyond it, and how to build a measurement system that supports decisions instead of just dashboards.

Table of Contents

- What DORA Metrics Are and What They Were Designed to Do

- The Three Blind Spots DORA Cannot See

- What the 2025 DORA Report Changed

- What Elite Engineering Teams Track Beyond DORA

- The DX Core 4 Framework

- The AI Attribution Problem

- Five AI-Specific Metrics DORA Ignores

- How to Build a Complete Engineering Intelligence Stack

- Connecting Metrics to Business Outcomes

- Frequently Asked Questions

What DORA Metrics Are and What They Were Designed to Do

The DORA framework gave software engineering something it badly needed: a shared and evidence-based language for delivery performance.

Its four original metrics still matter:

- Deployment Frequency

- Lead Time for Changes

- Change Failure Rate

- Mean Time to Recovery

DORA has since added a fifth metric, Rework Rate, which helps show how much engineering activity is reactive instead of planned. Mean Time to Recovery has also been reframed as Failed Deployment Recovery Time and repositioned within the model.

These metrics are still useful. The problem is not that DORA is wrong. The problem is that DORA is incomplete.
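As a rough illustration of what the four original metrics measure, the sketch below computes them from a list of deployment records. The `Deployment` record, its field names, and the use of medians are assumptions made for this example. They are not DORA's official definitions or any particular tool's schema.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    # Hypothetical record shape, chosen for this example only.
    committed_at: datetime                   # first commit of the change
    deployed_at: datetime                    # change reached production
    failed: bool = False                     # deployment caused a failure in production
    recovered_at: Optional[datetime] = None  # service restored (failed deployments only)


def dora_summary(deployments: list[Deployment], window_days: int) -> dict:
    """Summarize the four original DORA metrics over one reporting window."""
    if not deployments or window_days <= 0:
        raise ValueError("need at least one deployment and a positive window")
    lead_hours = [
        (d.deployed_at - d.committed_at).total_seconds() / 3600 for d in deployments
    ]
    failures = [d for d in deployments if d.failed]
    recovery_hours = [
        (d.recovered_at - d.deployed_at).total_seconds() / 3600
        for d in failures
        if d.recovered_at is not None
    ]
    return {
        "deployments_per_day": len(deployments) / window_days,
        "median_lead_time_hours": median(lead_hours),
        "change_failure_rate": len(failures) / len(deployments),
        "median_recovery_hours": median(recovery_hours) if recovery_hours else None,
    }


# Made-up sample data: four deployments in a seven-day window, one of which failed.
t0 = datetime(2026, 1, 5, 9, 0)
sample = [
    Deployment(t0, t0 + timedelta(hours=4)),
    Deployment(t0, t0 + timedelta(hours=8), failed=True,
               recovered_at=t0 + timedelta(hours=10)),
    Deployment(t0, t0 + timedelta(hours=12)),
    Deployment(t0, t0 + timedelta(hours=24)),
]
summary = dora_summary(sample, window_days=7)
```

Note that every value in `summary` is an outcome. Nothing in the record says why a deployment failed or who absorbed the cost, which is the limitation the rest of this article deals with.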

The Three Blind Spots DORA Cannot See

1. DORA tells you what, not why

DORA is excellent at showing outcomes. It is not built to explain causes.

If your change failure rate rises, DORA will show the trend. It will not tell you whether the issue is PR review delay, pipeline fragility, AI-generated code quality, overloaded teams, or a specific codebase.

DORA starts the conversation. It does not finish it.

2. DORA ignores the people inside the system

DORA measures the delivery pipeline. It does not tell you how the developers inside that pipeline are experiencing the work.

It cannot show:

- review overload on senior engineers

- trust erosion in AI-generated code

- rising cognitive load

- low collaboration quality

- burnout hidden behind acceptable delivery numbers

That is why strong delivery numbers can coexist with weak developer experience.

3. DORA cannot see AI's real impact

This is the most important blind spot in 2026.

A team can improve deployment frequency because AI generates more code faster, while change failure rate worsens because the code is harder to review or maintain. DORA captures the output but not the source. It does not know whether code was AI-assisted or human-authored.

That is the attribution gap. And without attribution, AI-era decisions get much harder.

If your team is actively measuring this layer, explore AI Impact and our guide to How to Measure AI-Assisted Software Development.

What the 2025 DORA Report Changed

The 2025 DORA report introduced two major shifts that matter directly for engineering leaders.

From four tiers to seven archetypes

Instead of simple performance tiers, the report introduced seven team archetypes that combine delivery, stability, and well-being patterns.

This matters because two teams can show similar DORA scores for completely different reasons. One may be genuinely healthy and well balanced. Another may be performing under unsustainable pressure. The dashboard alone does not reveal that.

The DORA AI Capabilities Model

The report also introduced a seven-part AI capabilities model:

- Clear and communicated AI stance

- Healthy data ecosystems

- AI-accessible internal data

- Strong version control practices

- Working in small batches

- User-centric focus

- Quality internal platforms

The core insight is simple: AI is an amplifier, not a fixer. Strong systems get stronger. Weak systems become more visibly unstable.

What Elite Engineering Teams Track Beyond DORA

Top-performing teams in 2026 do not discard DORA. They layer it with three additional dimensions:

- developer experience

- AI-specific attribution

- business outcome alignment

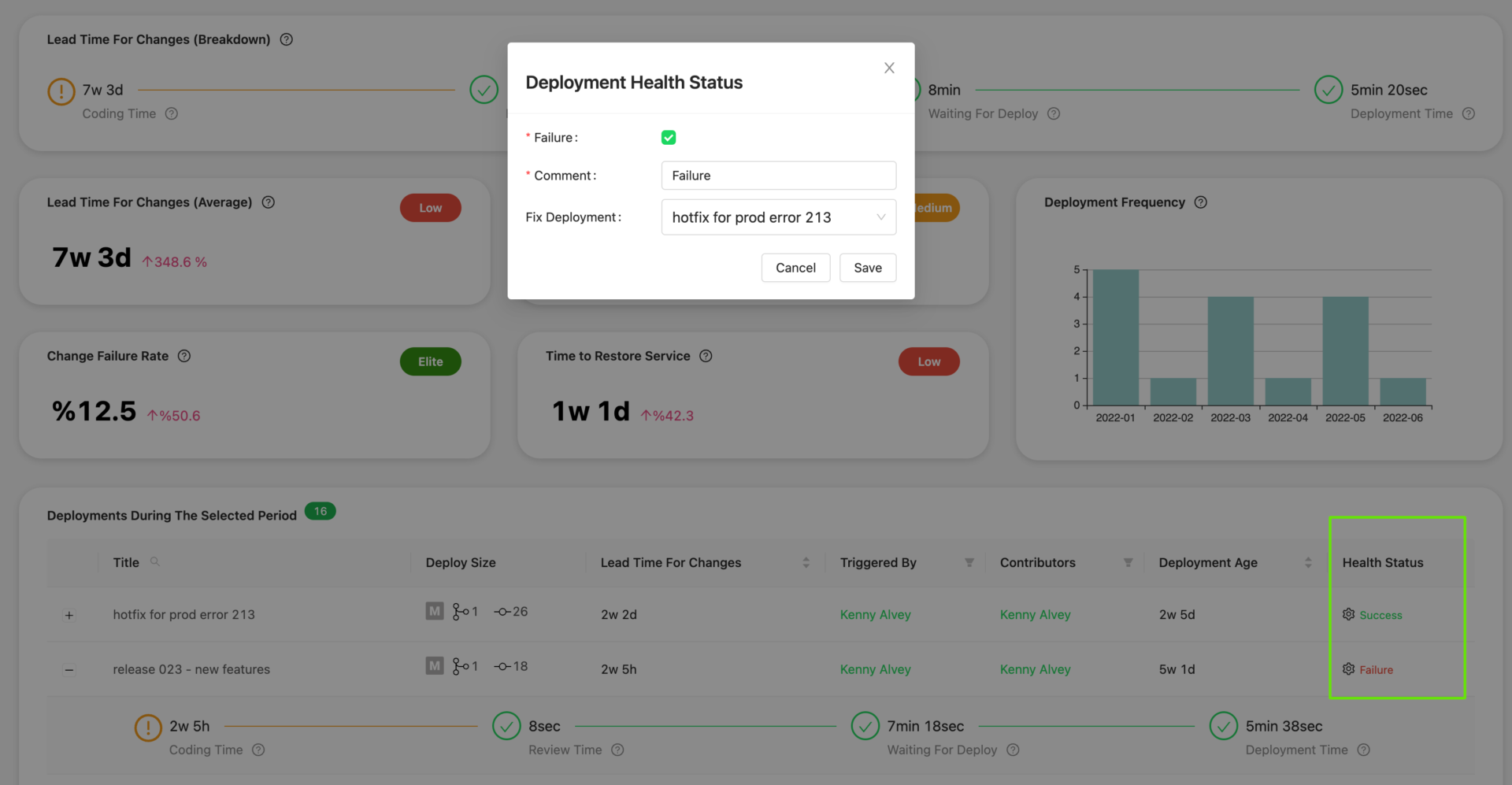

Delivery lifecycle depth

Elite teams break lead time into more actionable components:

- coding time

- pickup time

- review time

- deploy time

This helps them identify exactly where the bottleneck moved.
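One way to make this breakdown concrete is to derive the four stages from pull request timestamps. The sketch below assumes a hypothetical `PullRequest` record with five timestamps, and the stage boundaries it uses (for example, pickup time ending at the first review) are one common convention rather than a fixed standard.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class PullRequest:
    # Hypothetical timestamps; map them to whatever your Git and CI tools expose.
    first_commit_at: datetime
    opened_at: datetime
    first_review_at: datetime
    merged_at: datetime
    deployed_at: datetime


def lead_time_stages(pr: PullRequest) -> dict[str, timedelta]:
    """Split one PR's lead time into coding, pickup, review, and deploy time."""
    return {
        "coding": pr.opened_at - pr.first_commit_at,
        "pickup": pr.first_review_at - pr.opened_at,
        "review": pr.merged_at - pr.first_review_at,
        "deploy": pr.deployed_at - pr.merged_at,
    }


def slowest_stage(prs: list[PullRequest]) -> str:
    """Return the stage that consumed the most total time across all PRs."""
    totals: dict[str, timedelta] = {}
    for pr in prs:
        for stage, duration in lead_time_stages(pr).items():
            totals[stage] = totals.get(stage, timedelta()) + duration
    return max(totals, key=totals.get)


def hours(n: int) -> timedelta:
    return timedelta(hours=n)


# Made-up sample data.
t = datetime(2026, 1, 5, 9, 0)
sample_prs = [
    PullRequest(t, t + hours(6), t + hours(30), t + hours(34), t + hours(35)),
    PullRequest(t, t + hours(2), t + hours(12), t + hours(24), t + hours(25)),
]
bottleneck = slowest_stage(sample_prs)
```

In this sample the PRs spend more time waiting for a first review than in any other stage, so the bottleneck is pickup time, not coding speed.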

Developer workflow signals

Elite teams measure the human side of delivery too:

- satisfaction and well-being

- cognitive load

- trust in tools

- workflow friction

- review burden

This is where Engineering Metrics in the AI Era becomes relevant. Output can rise while flow gets worse.

Business alignment

The strongest teams also translate engineering metrics into executive language:

- revenue or value per engineer

- roadmap delivery ratio

- maintenance vs. new capability time

- incident cost

- time-to-market impact

This is where Engineering Benchmarks and Symptoms become useful complements to DORA.

The DX Core 4 Framework

One of the most useful post-DORA measurement models is DX Core 4, developed with input from several of the same thinkers behind DORA, SPACE, and DevEx research.

It organizes measurement into four balancing zones:

- Speed

- Effectiveness

- Quality

- Business Impact

The power of this model is that it resists metric gaming.

A team can inflate speed by merging more AI-generated PRs. It cannot simultaneously inflate effectiveness if review pressure, developer satisfaction, and trust are falling. That makes DX Core 4 especially relevant in AI-heavy delivery environments.

The AI Attribution Problem

AI adoption often creates a confusing pattern:

- throughput rises

- delivery does not improve as much as expected

- review load increases

- instability can rise alongside output

This is where DORA becomes difficult to interpret without segmentation.

If deployment frequency goes up but change failure rate also increases, you still do not know whether AI is helping or hurting unless you can separate AI-assisted work from human-authored work.

That is why engineering intelligence in 2026 increasingly requires attribution layers, not just aggregate delivery dashboards.
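The effect of segmentation can be shown with a small sketch. It assumes each change already carries an `ai_assisted` flag, which is exactly the data most pipelines lack today. How that flag is captured is outside the scope of this example.

```python
def failure_rates(changes):
    """Change failure rate overall and per origin.

    `changes` is an iterable of (ai_assisted, failed) boolean pairs.
    The ai_assisted flag is an assumption: DORA data does not include it.
    """
    tally = {"ai_assisted": [0, 0], "human": [0, 0], "all": [0, 0]}  # [failed, total]
    for ai_assisted, failed in changes:
        origin = "ai_assisted" if ai_assisted else "human"
        for key in (origin, "all"):
            tally[key][0] += int(failed)
            tally[key][1] += 1
    return {key: (f / n if n else None) for key, (f, n) in tally.items()}


# Made-up sample: 5 AI-assisted changes (2 failed), 10 human-authored changes (1 failed).
sample_changes = (
    [(True, True)] * 2 + [(True, False)] * 3
    + [(False, True)] * 1 + [(False, False)] * 9
)
rates = failure_rates(sample_changes)
```

With this sample, the aggregate failure rate is 20%, which hides a 40% rate for AI-assisted changes and a 10% rate for human-authored ones. The aggregate number alone would not tell you where to look.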

Five AI-Specific Metrics DORA Ignores

To close that gap, elite teams now track an explicit AI measurement layer.

1. AI code share

What percentage of merged code was AI-assisted or AI-generated?

This is the segmentation layer that makes the rest of the analysis possible.
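A minimal sketch of this calculation, assuming each merged PR reports both its total added lines and the subset attributed to AI assistance. The field names `lines_added` and `ai_lines_added` are hypothetical, and attributing lines to AI is itself an estimate in most tools.

```python
def ai_code_share(merged_prs: list[dict]) -> float:
    """Fraction of merged added lines that were attributed to AI assistance."""
    total = sum(pr["lines_added"] for pr in merged_prs)
    ai = sum(pr["ai_lines_added"] for pr in merged_prs)
    return ai / total if total else 0.0


# Made-up sample data.
merged = [
    {"lines_added": 100, "ai_lines_added": 40},
    {"lines_added": 200, "ai_lines_added": 20},
    {"lines_added": 100, "ai_lines_added": 100},
]
share = ai_code_share(merged)
```

Weighting by lines, as here, is only one option. Counting PRs with any AI involvement gives a different and usually higher number, so the definition should be fixed before teams are compared.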

2. AI vs. human PR cycle time

If AI-assisted PRs take longer to review, you have identified a likely delivery bottleneck.
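The comparison can be sketched as a median per group. The `ai_assisted` and `cycle_hours` fields are assumed names for this example, and the median is used because a few very slow PRs would distort a mean.

```python
from statistics import median


def median_cycle_hours(prs: list[dict]) -> dict[str, float]:
    """Median PR cycle time in hours, split by AI involvement."""
    groups: dict[str, list[float]] = {}
    for pr in prs:
        key = "ai_assisted" if pr["ai_assisted"] else "human"
        groups.setdefault(key, []).append(pr["cycle_hours"])
    return {key: median(values) for key, values in groups.items()}


# Made-up sample data.
prs = [
    {"ai_assisted": True, "cycle_hours": 30},
    {"ai_assisted": True, "cycle_hours": 50},
    {"ai_assisted": True, "cycle_hours": 40},
    {"ai_assisted": False, "cycle_hours": 10},
    {"ai_assisted": False, "cycle_hours": 20},
]
cycle = median_cycle_hours(prs)
```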

3. AI code churn rate

How much recently written AI-assisted code is deleted or rewritten?

High churn is often a hidden quality signal.
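One possible definition, sketched below, counts a line as churned if it is removed or rewritten within a fixed window after it was written. The 21-day window and the `LineRecord` shape are assumptions for illustration. Pick a window that matches your own release cadence.

```python
from datetime import date, timedelta
from typing import NamedTuple, Optional


class LineRecord(NamedTuple):
    # Hypothetical per-line history, e.g. derived from git blame data.
    ai_assisted: bool
    written_on: date
    removed_on: Optional[date]  # None if the line still exists


def churn_rate(lines: list[LineRecord], ai_assisted: bool,
               window: timedelta = timedelta(days=21)) -> float:
    """Share of lines in one cohort that were removed within `window` of being written."""
    cohort = [ln for ln in lines if ln.ai_assisted == ai_assisted]
    if not cohort:
        return 0.0
    churned = sum(
        1 for ln in cohort
        if ln.removed_on is not None and ln.removed_on - ln.written_on <= window
    )
    return churned / len(cohort)


# Made-up sample data.
d = date(2026, 1, 1)
history = [
    LineRecord(True, d, d + timedelta(days=5)),    # churned
    LineRecord(True, d, d + timedelta(days=40)),   # removed, but outside the window
    LineRecord(True, d, None),                     # still present
    LineRecord(True, d, d + timedelta(days=10)),   # churned
    LineRecord(False, d, None),
    LineRecord(False, d, None),
    LineRecord(False, d, None),
    LineRecord(False, d, d + timedelta(days=3)),   # churned
]
ai_churn = churn_rate(history, ai_assisted=True)
human_churn = churn_rate(history, ai_assisted=False)
```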

4. AI suggestion acceptance trend

Acceptance rate matters, but its direction over time matters more. A declining trend often indicates trust or relevance problems.
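Direction over time can be approximated with a simple least-squares slope over weekly acceptance rates. This is a sketch under the assumption that rates are sampled at equal weekly intervals. It is not how any specific AI coding tool reports acceptance.

```python
def trend_slope(weekly_rates: list[float]) -> float:
    """Least-squares slope of acceptance rate per week (negative means declining)."""
    n = len(weekly_rates)
    if n < 2:
        raise ValueError("need at least two data points to estimate a trend")
    x_mean = (n - 1) / 2
    y_mean = sum(weekly_rates) / n
    numerator = sum((i - x_mean) * (r - y_mean) for i, r in enumerate(weekly_rates))
    denominator = sum((i - x_mean) ** 2 for i in range(n))
    return numerator / denominator


# Made-up sample: acceptance falls by two percentage points each week.
rates_by_week = [0.40, 0.38, 0.36, 0.34]
slope = trend_slope(rates_by_week)
```

A slope close to zero with a high rate is a different situation from the same rate on a steady decline, which is why the trend is tracked alongside the level.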

5. PR review load per senior engineer

This is one of the strongest leading indicators of future friction, burnout, and review degradation in AI-assisted environments.
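A minimal way to watch this signal is to count completed reviews per senior engineer per week and flag anyone above a limit. The reviewer names, the list of seniors, and the weekly limit below are all hypothetical values for the example.

```python
from collections import Counter


def review_load(review_events: list[str], seniors: set[str]) -> dict[str, int]:
    """Reviews completed in the period by each senior engineer.

    `review_events` holds one reviewer name per completed review.
    """
    counts = Counter(review_events)
    return {name: counts[name] for name in sorted(seniors)}


def overloaded(load: dict[str, int], max_reviews_per_week: int) -> list[str]:
    """Names whose weekly review count exceeds the chosen limit."""
    return [name for name, n in load.items() if n > max_reviews_per_week]


# Made-up sample week.
events = ["ana", "ana", "ben", "cy", "ana", "ben", "ana"]
load = review_load(events, seniors={"ana", "ben", "dee"})
flagged = overloaded(load, max_reviews_per_week=3)
```

Raw counts ignore PR size, so a fuller version would likely weight each review by lines changed or by review time.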

If you want this in a more operational product view, look at the dedicated pages for GitHub Copilot, Cursor, and Claude.

How to Build a Complete Engineering Intelligence Stack

The most practical stack in 2026 is layered.

Layer 1: DORA baseline

Start with consistent DORA definitions across teams and collect enough historical data to establish a reliable baseline.

Layer 2: Developer experience

Add a lightweight monthly pulse on:

- perceived productivity

- workflow friction

- cognitive load

- trust in AI output

- review load
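The pulse results can be summarized with very little tooling. The sketch below assumes a 1 to 5 scale on which every question is phrased so that a higher score is better, and it flags any dimension whose average falls below a chosen floor. The dimension keys and the floor of 3.0 are illustrative choices.

```python
from statistics import mean


def pulse_summary(responses: list[dict[str, int]], floor: float = 3.0):
    """Average each survey dimension and list the ones scoring below `floor`."""
    by_dimension: dict[str, list[int]] = {}
    for response in responses:
        for dimension, score in response.items():
            by_dimension.setdefault(dimension, []).append(score)
    averages = {dim: mean(scores) for dim, scores in by_dimension.items()}
    needs_attention = sorted(dim for dim, avg in averages.items() if avg < floor)
    return averages, needs_attention


# Made-up responses from two developers.
responses = [
    {"perceived_productivity": 4, "workflow_friction": 3, "cognitive_load": 2,
     "trust_in_ai_output": 3, "review_load": 2},
    {"perceived_productivity": 4, "workflow_friction": 4, "cognitive_load": 3,
     "trust_in_ai_output": 2, "review_load": 2},
]
averages, needs_attention = pulse_summary(responses)
```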

Layer 3: AI attribution

Instrument:

- AI code share

- AI vs. human PR cycle time

- code churn segmented by AI involvement

Layer 4: Business alignment

Translate delivery and quality metrics into business language:

- time-to-market

- downtime cost

- support burden

- R&D allocation

- AI ROI

The goal is not a bigger dashboard. The goal is a system that tells leadership what is happening, why it is happening, and what should change next.

Connecting Metrics to Business Outcomes

Most engineering leaders can explain their DORA scores. Fewer can explain what those numbers mean in commercial terms.

A more useful executive translation looks like this:

- Deployment Frequency -> time-to-market advantage

- Change Failure Rate -> incident cost and release risk

- Recovery Time -> operational impact and support load

- AI investment -> measurable delivery and quality delta

Once metrics are framed this way, engineering discussions become easier to connect to portfolio, board, and finance conversations.
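As one example of that translation, change failure rate and recovery time can be turned into an approximate monthly incident cost. The formula and every number below are simplifying assumptions for illustration. A real model would use your own cost-per-hour estimate and would probably distinguish incident severities.

```python
def monthly_incident_cost(deployments_per_month: float,
                          change_failure_rate: float,
                          mean_recovery_hours: float,
                          cost_per_incident_hour: float) -> float:
    """Rough monthly cost of failed deployments.

    Assumes every failed deployment causes one incident that lasts the
    mean recovery time and costs a flat hourly amount.
    """
    failed_deployments = deployments_per_month * change_failure_rate
    return failed_deployments * mean_recovery_hours * cost_per_incident_hour


# Made-up inputs: 120 deployments, 10% failure rate, 2-hour recovery, 5,000 per hour.
cost = monthly_incident_cost(120, 0.10, 2.0, 5000.0)
```

With these inputs, 12 failed deployments at 2 hours each and 5,000 per hour come to roughly 120,000 per month, a figure that finance and board audiences can weigh directly against the cost of reducing the failure rate.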

Frequently Asked Questions

Are DORA metrics still relevant in 2026?

Yes. They are still the strongest common baseline for delivery performance. They are just no longer enough on their own.

What changed in the 2025 DORA report?

The report moved beyond simple linear tiers, introduced seven team archetypes, and added the DORA AI Capabilities Model as a framework for understanding whether AI is stabilizing or destabilizing delivery.

What do elite engineering teams track beyond DORA?

They add developer experience metrics, AI-specific attribution signals, and business outcome alignment on top of their DORA baseline.

What is DX Core 4?

It is a balancing model built around Speed, Effectiveness, Quality, and Business Impact. Its value is that it helps organizations avoid optimizing one dimension at the expense of the others.

Why does DORA struggle with AI?

Because DORA is outcome-focused, not attribution-focused. It does not know whether the code being measured was AI-assisted or human-authored.

Conclusion

DORA gave software engineering its first rigorous, evidence-based delivery framework. It still matters. But in a world where AI is accelerating output, shifting bottlenecks, and complicating review and quality patterns, DORA by itself is no longer the whole answer.

The strongest teams in 2026 build engineering intelligence stacks:

- DORA as the delivery foundation

- developer experience as the human layer

- AI attribution as the accountability layer

- business alignment as the executive layer

Together, those layers answer not only what is happening, but why it is happening, who is affected, whether AI is helping, and what it means for the business.

If you are still relying on DORA alone, the next useful step is not a bigger dashboard. It is a better measurement model.

Written by Sukru Cakmak

Sukru Cakmak is the Co-Founder & CTO of Oobeya. He works closely on the platform's technical direction, engineering intelligence capabilities, and the practical challenges of measuring software delivery, developer productivity, and AI-assisted development across modern SDLC environments.